Using speech recognition to program by voice alone.

My Vocola 3 grammars were created out of necessity. To make a long story short, in 2016 I badly injured my wrists and was unable to use a computer at all for several months. Even once I recovered, programming seemed impossible because sustained typing was so painful. But after seeing a presentation by Tavis Rudd, I decided to give voice programming a try, and it worked like a charm.

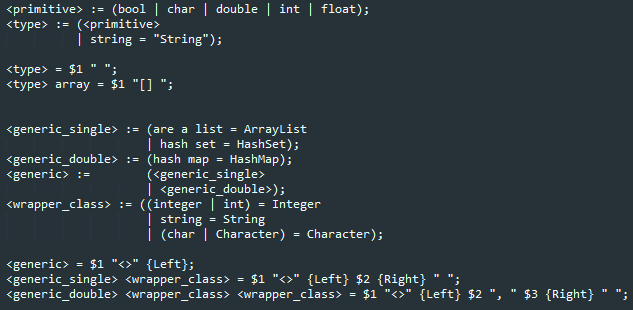

This project is a collection of grammars that parse spoken English words and other short utterances into keyboard commands or into Python, Java, or web code. In effect, the result is a language midway between spoken English and written code. The grammars are written in the Vocola 3 scripting language and run on Windows Speech Recognition, the standard speech recognition app that ships with Windows. I’ve written grammars for three levels of use case:

- General dictation and everyday Windows use

- Interfacing with the specific apps I use, such as bash, git, and my IDEs

- Programming grammars for Python, Java, and front-end web development
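One plausible way to arrange the three levels is in layers: the general grammar always active, app grammars following the foreground window, and a language grammar following the file being edited. The Java sketch below shows only that selection logic, under stated assumptions: the grammar names, executable names, and file-extension table are hypothetical, and it does not reproduce how Vocola 3 or Windows Speech Recognition actually decide which grammars are loaded.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

public class GrammarSelector {
    // Level 1: always loaded. Every grammar name in this sketch is hypothetical.
    static final String GENERAL = "general";

    // Level 2 (hypothetical): foreground executable -> app-specific grammars.
    static final Map<String, List<String>> APP_GRAMMARS = Map.of(
            "bash.exe", List.of("bash", "git"),
            "idea64.exe", List.of("ide"));

    // Level 3 (hypothetical): file extension -> programming-language grammar.
    static final Map<String, String> LANGUAGE_GRAMMARS = Map.of(
            "py", "python",
            "java", "java",
            "html", "web",
            "css", "web",
            "js", "web");

    /** Returns the grammars to keep loaded, from most general to most specific. */
    static List<String> activeGrammars(String foregroundApp, String openFile) {
        List<String> active = new ArrayList<>();
        active.add(GENERAL);
        active.addAll(APP_GRAMMARS.getOrDefault(foregroundApp, List.of()));
        if (openFile != null) {
            int dot = openFile.lastIndexOf('.');
            String language = dot < 0 ? null : LANGUAGE_GRAMMARS.get(openFile.substring(dot + 1));
            if (language != null) {
                active.add(language);
            }
        }
        return active;
    }

    public static void main(String[] args) {
        System.out.println(activeGrammars("idea64.exe", "Main.java")); // [general, ide, java]
        System.out.println(activeGrammars("bash.exe", null));          // [general, bash, git]
    }
}
```

Whenever the foreground window or the open file changes, any grammar missing from the returned list would be unloaded. Keeping the rule in one pure function makes it easy to test without a speech engine attached.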

These grammars are dynamically loaded and unloaded as needed, and allow me to use my voice for most of what I used to do with my hands (albeit somewhat more slowly). For example, if I wanted to write this Java code:

```java
Map<Character, Integer> frequency = new HashMap<Character, Integer>();

for (char letter : alphabet.toCharArray()) {
    frequency.put(letter, 0);
}
```

I’d say:

```
map char int frequency qual new hashmap char int funk doop
slap 2
for char letter face alphabet to char array camel 3 dottie 2 funk
body
frequency put dottie
funk letter swipe null doop
```
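To make the mapping from spoken words to code more concrete, here is a toy Java sketch (Java 16 or later) that expands a few of the phrases above. Everything in it is an assumption: the word meanings are guessed from the example (qual for ` = `, swipe for `, `, face for ` : `, funk for a pair of parentheses with the cursor left inside, doop for jumping to the end of the line and typing `;`, camel and dottie for joining the preceding N words in camelCase or with dots, and null for the digit 0). It is not the project’s Vocola 3 code, and it skips the generics (`map char int`), `for`, `body`, and `slap` commands.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

public class SpokenCode {
    // Text left of the cursor; adjacent `word` pieces are separated by a space.
    record Piece(String text, boolean word) {}

    // Hypothetical vocabulary guessed from the spoken example, not the real grammar.
    static final Map<String, String> SYMBOLS = Map.of("qual", " = ", "swipe", ", ", "face", " : ");
    static final Map<String, String> WORDS = Map.of("null", "0");

    static String translate(String spoken) {
        List<Piece> before = new ArrayList<>();   // text left of the cursor
        StringBuilder after = new StringBuilder(); // text right of the cursor
        String[] tokens = spoken.trim().split("\\s+");
        for (int i = 0; i < tokens.length; i++) {
            String t = tokens[i];
            switch (t) {
                case "camel", "dottie" -> {
                    // Optional count of preceding words to join; defaults to 2.
                    int n = 2;
                    if (i + 1 < tokens.length && tokens[i + 1].matches("\\d+")) {
                        n = Integer.parseInt(tokens[++i]);
                    }
                    n = Math.min(n, before.size());
                    if (n == 0) {
                        continue;
                    }
                    List<Piece> last = before.subList(before.size() - n, before.size());
                    StringBuilder joined = new StringBuilder(last.get(0).text());
                    for (Piece p : last.subList(1, last.size())) {
                        String s = p.text();
                        joined.append(t.equals("camel")
                                ? Character.toUpperCase(s.charAt(0)) + s.substring(1)
                                : "." + s);
                    }
                    last.clear(); // removes the joined words from `before`
                    before.add(new Piece(joined.toString(), true));
                }
                case "funk" -> { // type "()" and leave the cursor between them
                    before.add(new Piece("(", false));
                    after.insert(0, ")");
                }
                case "doop" -> { // jump to the end of the line, then type ";"
                    before.add(new Piece(after.toString(), false));
                    after.setLength(0);
                    before.add(new Piece(";", false));
                }
                default -> {
                    if (SYMBOLS.containsKey(t)) {
                        before.add(new Piece(SYMBOLS.get(t), false));
                    } else {
                        before.add(new Piece(WORDS.getOrDefault(t, t), true));
                    }
                }
            }
        }
        StringBuilder out = new StringBuilder();
        for (int i = 0; i < before.size(); i++) {
            Piece p = before.get(i);
            if (i > 0 && p.word() && before.get(i - 1).word()) {
                out.append(' ');
            }
            out.append(p.text());
        }
        return out.append(after).toString();
    }

    public static void main(String[] args) {
        System.out.println(translate("frequency put dottie funk letter swipe null doop")); // frequency.put(letter, 0);
        System.out.println(translate("alphabet to char array camel 3 dottie 2 funk"));     // alphabet.toCharArray()
    }
}
```

Tracking a cursor, rather than only appending text left to right, is what lets funk open a call whose arguments are spoken afterwards and lets doop close the statement later.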

Speaking code this way might sound silly, but it gets the job done. I decided to share the project on GitHub to help other programmers who, like me, are injured or disabled. The code is kind of a mess, since the project is under constant development as I tweak my grammars to handle each new situation I run into. But I’m working on refactoring it into a more readable and coherent form.